Will my staff actually use it?

Every owner has a story about a training tool nobody opened. Here is why most training tools fail at adoption and what changes when the tool meets staff where they already are.

Eamonn Best

Founder, Lattify · May 19, 2026

Every owner I talk to has a version of the same story. They bought Trainual three years ago. The owner watched the welcome video herself. Two staff watched it under instruction. Nobody has opened it since. The login sits on the manager's laptop, forgotten. The subscription renews every month and the owner has been meaning to cancel for fourteen months. It's still on the credit card statement.

When that owner hears about a new training tool, her first response is "my staff won't use it." She's right to be skeptical. She has direct evidence that the previous tool sat unused for three years. The £29-a-month renewal is sitting in her bookkeeping right now.

I want to take that objection seriously. It's the most common one I hear, and it's the most legitimate.

Why the skepticism is earned

The Trainual story is common. It's the modal outcome for SMB training tools. Adults at work guess, text a senior, or push the problem to tomorrow rather than open a separate app to read a procedure. The app the owner paid for sits unopened on their phones, or never loaded on the back-of-house laptop.

The mechanism is consistent across tools.

Trainual, the SMB training platform with the biggest brand recognition in this category, is built around manual writing by the owner and a wiki-style interface for staff to consult. Once the owner has written the content (assuming she actually did, which most owners I talk to admit they didn't), staff are expected to open the app, browse to the relevant page, and read. Adults at work skip that step.

Wiki tools (the kind tech companies use internally) ask staff to browse a structured page tree to find what they need. Browsing fails the moment the staff member is mid-shift with a queue behind her.

YouTube playlists fail differently. The senior records a five-minute walkthrough. The new starter watches it on day one. Two weeks later she needs step 4. She has to scrub through the whole video again. She gives up and guesses.

Short screen recordings shared in WhatsApp fail for a different reason again. The senior sends a quick walkthrough on a Tuesday. The new starter who needs it on Friday cannot find it. The owner has lost the link too. The recording is in some thread somewhere. The new starter never finds it.

Four tools, four failure modes, one common root. Each tool requires the staff member to open a separate app, navigate to the right place, and consume content at the moment she needs an answer. That sequence has too much friction. Staff give up.

The owner who watched her training tool sit unused is reading her evidence correctly. The tool failed.

What actually drives tool usage

Two things every adult with a smartphone already does, multiple times a day, without thinking about it.

Google. Type a question, get an answer. Three seconds. Plain English in, the result in front of you. The user types in the familiar search bar and reads. Friction is zero.

ChatGPT. Ask in plain language, get a contextual response. The user writes naturally and the system understands. Friction is zero.

These behaviours work because the friction is zero. The tool meets the user where she already is. Every adult with a smartphone already searches Google and asks ChatGPT. The behaviour is universal.

Training tools that ask staff to learn a different interaction model start from a behavioural deficit. The staff member has to decide to open a separate app instead of using the search bar she already uses. Most stick with their default.

The tools that achieved mass consumer adoption (Google, ChatGPT, WhatsApp, Instagram) share a property. They require zero behavioural change at the moment of use. Type into a familiar box, send a message, scroll a feed. The action is already wired into the user's hand.

Training tools have historically required four steps at the moment of need. Stop what you're doing, open a different app, learn its navigation, find your content. Every step is a chance to give up. Most staff give up at step one.

What I built to do this differently

That's why I built Lattify with a different consumption mechanic. Three things in particular.

Guides arrive before they're needed. When a new starter signs her contract, the relevant guides land on her phone or the venue tablet the next morning. She watches them before her first shift because completion is tracked and the owner sees who watched what. Day one she walks in with a foothold. The owner has stopped relying on her to go looking for the content.

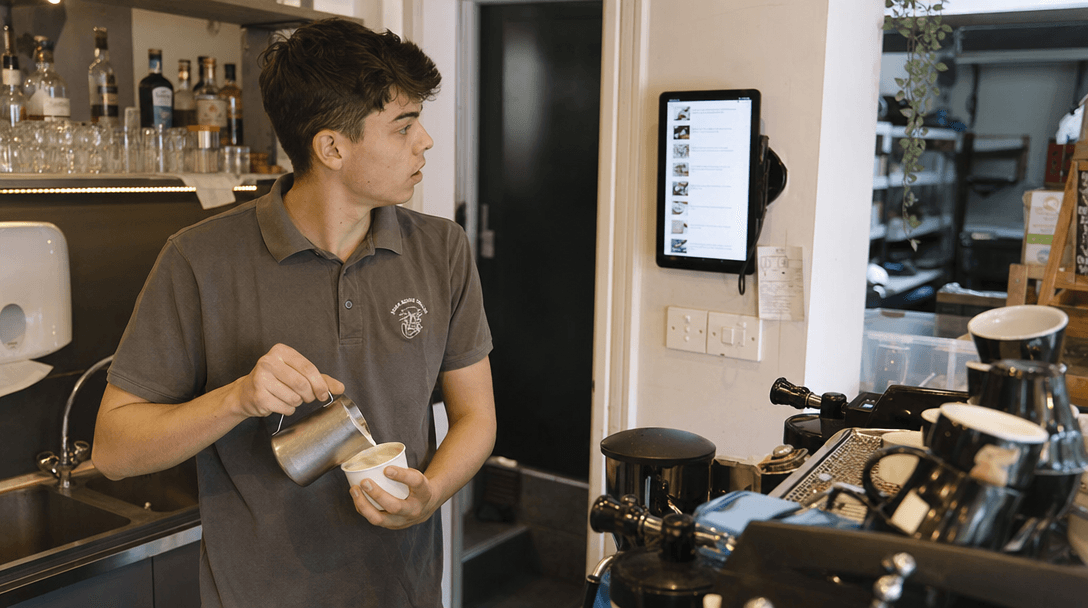

Search replaces menus. When a staff member needs an answer mid-shift, she types her question on her phone or the back-of-house tablet. "How do I do a refund." The answer comes back from the operator's content, the videos and guides the owner has captured for her own venue. The staff member doesn't need to know which guide it's in. She uses her phone the way she already uses it. Type the question, get the answer, get back to the shift.

Take a worked example. Saturday at 7pm, a customer at the bar wants a refund on a card payment from earlier. The card declined for the second item, the bill was split, and the staff member has done this twice before, both times with the senior watching. She types "how do I refund a split card payment" into her phone. The answer comes back from the operator's own POS procedure, with the screenshot at step 3 and the manager-PIN reminder. She does the refund. The customer leaves happy. The senior, who's on prep, never had to come over.

The AI advisor answers mid-task questions. When a staff member is stuck partway through a procedure, the advisor answers grounded in the operator's specific content. The staff member experiences this as asking her phone a question and getting an answer that's right for her venue. The mental model is ChatGPT meets Google for the operator's own business.

Different example. A new starter on a Wednesday afternoon is closing the kitchen. She's at step 6 of the close, which involves the gas isolator behind the fryer. She types "is the gas isolator on the left or right side." The advisor answers from her venue's close guide, names the specific isolator, and reminds her to log the time in the closing checklist. Forty seconds, and she's back to the close. The senior stayed at her station, the owner stayed at home.

For staff who don't carry personal phones at work, the same content runs on a venue tablet at the back of house or by the till. Same library, same search, same advisor. The owner picks the device. The mechanic is the same.

The shift in consumption is the part that matters. The behavioural cost at the moment of need is the same as Googling something. Staff search their phone for a work question and get an answer that's right for their venue.

Your half of the work

The tool is one half. The other half is you, the owner, deciding that the new norm is search-first.

The most common failure mode I see when an operator pilots a new tool is the owner answering the 3am pin-code call. Lattify has the pin code. The staff member has Lattify on her phone. The owner picks up anyway. Two weeks in, the staff member has learned that calling the owner is faster than searching. Adoption flatlines and the owner concludes "they won't use it."

The fix takes practice. When the call comes in, the answer is "have you searched it on Lattify." If she hasn't, she searches it, finds the answer, and the call ends. The next time, she goes straight to the search. You train the behaviour in by redirecting.

This is the part most operators find harder than the tech. The owner has spent years being the answer to every question. Letting the search bar take the question instead requires the owner to actively redirect the team to the tool for the first two or three weeks. After that the behaviour is in, and the calls stop coming.

A few things that help in the first month. On day one, walk the team through one search together so they see the result come back from your own venue's content. When you get a call or a text with a question that's findable, send it back with "have you tried searching it." If they haven't, ask them to and come back only if the answer is wrong. Watch the adoption dashboard once a week. If one staff member's searches are zero after a fortnight, that's a coaching conversation.

You get out what you put in. The owners I see succeed with Lattify treat the first month as a coaching exercise. The owners who treat it as "I bought the tool, now they should use it" get the same result they got with their last tool.

Honest filter at the end of 30 days. If you've coached the team, redirected the questions back to the tool, and the dashboard still shows zero searches and zero guide views, the issue is the workplace. A team that's checked out for reasons that go beyond software is showing you something true about your operation. No training tool fixes a culture problem. That said, most pilots show real engagement once the friction is low and the owner sets the expectation.

The 30-day pilot

The only way to know whether it'll work in any specific operation is to run it. The pilot is free for 30 days. Adoption data is live in the owner's dashboard from day one. Which guides got watched. Which questions got searched. Which staff member asked the advisor what, when, and how often.

The data tells the owner whether the team engaged. Live numbers on actual usage, broken down by person, day by day.

Most operators who said "they won't use it" before the pilot found their team did engage during the pilot. The team engaged because the tool worked the way they already used their phones. The senior asked the advisor more questions than the new starter did, because the senior had more edge cases to look up.

Close

The owner who bought Trainual three years ago and watched it sit unused has a real reason to be skeptical. The previous tool failed. Her evidence is real. The way to find out whether the new tool fails the same way is to run the pilot and read the adoption data on day 30.

If any of this sounded familiar, we built Lattify for exactly this problem.

Join the Waitlist